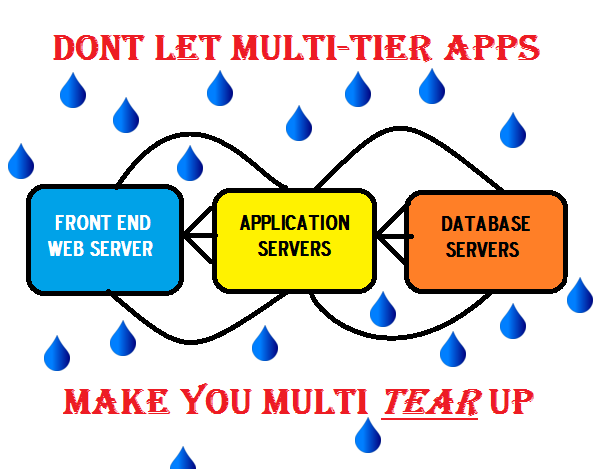

Problem to Solve – My company uses a Multi-Tier ERP application that is critical to our business. Service degradations and outages are extremely visible to executive management and costly to our bottom line. How can we reduce the time to triage and restore services?

I would estimate that 95% of my customers ask me how to solve performance issues in a multi-tier application environment. Multi-tier applications are not exclusive to ERP deployments; they are common across many verticals, including patient care, banking, manufacturing, retail/e-commerce, public utilities, etc. What frustrates my customers the most is the time it takes to identify the area within the multi-tier application that is causing the issue. Ok, maybe not to the point of “tears”, but certainly to the point of frustration. Contributing to the frustration is that issues with a multi-tier application almost always coincide with some level of executive interaction, a subsequent war room (see my article on War Room), and a negative effect on your company’s overall business. Those negative effects can touch a wide range of things, including revenue, productivity, customer loyalty, product shipment, critical services, etc.

Greeeaaaaaatttttttt ….. this Sounds Fun

Not a pretty picture, nor a fun situation for anyone in IT, so what can you do? Well, we like to use the phrase “putting probability on your side”, and that involves a strategy for monitoring the application from a service perspective. What does a “service perspective” mean with respect to a multi-tier application? It means that you look at the whole enchilada, as they say: every piece of the multi-tier application that can impact it as a service. I have seen customers wind up frustrated by attempting to address this issue with a component management approach. Examples of this approach include monitoring layer 2 network utilization on high-value links, or simple server monitoring such as disk space, CPU, memory, processes, etc. These are great metrics to collect regarding the performance of your individual servers, and I would completely agree that this is useful information. The frustration is that this approach can tell you that your server environment is working “just fine” from a device perspective, yet … you still have an angry community of multi-tier application users.

Another approach is to use synthetic agents that run synthetic transaction tests against your network, servers, and applications. Again, I completely agree that this information can be useful and definitely has its place. Where it can be frustrating is the overall management and administration of the synthetic testing environment on a grand scale: changes in application code, changes in transaction flows, the sheer number of servers, virtualized server deployments, and application upgrades all mean the tests have to be maintained. The other challenge for this type of deployment is seeing the traffic that is “not necessarily part of the multi-tier application”, yet is very much service impacting. The goal here is not to start an argument on architecture or approach … but to offer up another alternative.

What in the World is Service Triage?

We use this term, again, for an approach that monitors the application from a “service” perspective. This means looking at the pieces and parts of the network, application, database, web servers, virtualized servers, key service enablers, etc. that make up the “service”. The Service Triage approach lets you look at the overall health of the system rather than simply focusing on a particular transaction. Customers that have leveraged this approach have seen dramatic reductions in service downtime and poor performance. So let’s break this down at a high level.

Service Enablers (DHCP, DNS, LDAP, RADIUS, etc.)

So many times customers will focus on the main parts of the application itself (front end – app – DB), yet forget about these critical service enablers that traverse the logical network. If you think about it, if these protocols are not properly doing their respective jobs, it WILL affect the multi-tier application itself. One area that I find to be a huge contributor to frustration is DNS. I am not referring only to DNS as an all-encompassing protocol, but also to the individual DNS name lookups made within the multi-tier application environment. DNS can be the easiest thing to triage and identify, but it is often the last item investigated.

Why is this Valuable? If the front end of your application call starts with a client DNS call to “webserver1.company.com” and DNS cannot properly resolve the name for whatever reason, what happens? The client waits for the DNS query to time out, then requests the same name from a different server, and that lookup fails as well. Each time specific DNS calls within the application environment fail like this, your frustrated non-technical user only sees delays, latency, slowdowns, and “stuff not working”.
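To make the DNS point concrete, here is a minimal sketch of timing the name lookups an application depends on and flagging the ones that fail or run slow. The function names, the 200 ms threshold, and the list of hostnames are my own assumptions for illustration, not part of any particular monitoring product; it uses only the standard library resolver call `socket.getaddrinfo`.

```python
import socket
import time


def time_lookup(name, resolver=socket.getaddrinfo):
    """Resolve one name; report whether it worked and how long it took."""
    start = time.perf_counter()
    try:
        resolver(name, None)
        ok = True
    except OSError:  # socket.gaierror is a subclass of OSError
        ok = False
    elapsed_ms = (time.perf_counter() - start) * 1000.0
    return {"name": name, "ok": ok, "ms": elapsed_ms}


def slow_or_failed(names, threshold_ms=200.0, resolver=socket.getaddrinfo):
    """Return only the lookups that failed or exceeded the threshold.

    threshold_ms is an assumed, illustrative value; tune it to your network.
    """
    results = [time_lookup(name, resolver) for name in names]
    return [r for r in results if not r["ok"] or r["ms"] > threshold_ms]


if __name__ == "__main__":
    # Hypothetical hostnames; substitute the names your application calls.
    for result in slow_or_failed(["webserver1.company.com", "localhost"]):
        print(result)
```

The `resolver` parameter is there only so the check can be exercised without touching a live DNS server. Note that this measures lookups from wherever the script runs, which may not match what the application servers themselves experience.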

Front End (Citrix, Web Server Farm, etc.)

After name resolution and authentication, the next hop is usually the front end of the multi-tier application. This tier is often served up by Citrix, HTTP, or HTTPS servers that make the calls to the actual application server. When these protocols are experiencing issues (e.g., “HTTP 404 errors – URL not found”), the errors can be difficult to locate, depending on your chosen method of instrumentation.

Why is this Valuable? The key is to identify and triage the issue as quickly as possible. If you can determine which part of your “service” is experiencing error conditions, you are on your way to problem resolution. The ability to isolate errors down to the protocol level only helps to quicken the triage process.
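As a rough illustration of isolating errors at the protocol level, the sketch below takes observed HTTP responses as `(server, status_code)` pairs and computes an error rate per front-end server. The input format and the function name are assumptions of mine; in practice the observations would come from whatever instrumentation you already have.

```python
from collections import Counter


def error_rates(observations):
    """Compute the fraction of HTTP 4xx/5xx responses per server.

    observations: iterable of (server, status_code) pairs (assumed format).
    """
    totals = Counter()
    errors = Counter()
    for server, status in observations:
        totals[server] += 1
        if status >= 400:  # 4xx client errors and 5xx server errors
            errors[server] += 1
    return {server: errors[server] / totals[server] for server in totals}


if __name__ == "__main__":
    # Hypothetical sample data.
    sample = [("web1", 200), ("web1", 404), ("web2", 200), ("web2", 200)]
    print(error_rates(sample))
```

A server whose rate stands out from the rest of the farm is a reasonable place to start looking.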

Application and Virtualized Servers

This is often the place where application triage starts, and that is not a bad thing (assuming that this is where the actual problem resides). With blade servers, load balancers, and virtualized servers in most customer environments, knowing the application latency at this tier is key information.

Why is this Valuable? Items such as TCP resets, session loads, latency, and load-balancing behavior can be great indicators of performance issues at this tier. If you can quickly locate a specific error condition between virtualized servers, or on a Virtual IP running on a blade server that hosts 20 guests, you have covered a lot of ground in terms of getting to problem isolation.
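One simple way to sanity-check load balancing is to compare each pool member's session count against the pool median and flag the outliers. This is only a sketch under my own assumptions: the 50% tolerance, the function name, and the dictionary input are illustrative, and the session counts would come from your load balancer or monitoring tool.

```python
from statistics import median


def uneven_members(sessions_by_server, tolerance=0.5):
    """Flag servers whose session count deviates from the pool median.

    tolerance is the allowed fractional deviation (0.5 = 50%), an assumed
    default chosen for illustration.
    """
    mid = median(sessions_by_server.values())
    if mid == 0:
        # With an idle median, any server carrying sessions stands out.
        return sorted(s for s, n in sessions_by_server.items() if n > 0)
    return sorted(
        s for s, n in sessions_by_server.items()
        if abs(n - mid) / mid > tolerance
    )


if __name__ == "__main__":
    # Hypothetical pool behind one Virtual IP.
    print(uneven_members({"app1": 100, "app2": 110, "app3": 20}))
```

The same comparison works for TCP reset counts or latency per guest; the point is to spot the one member that behaves differently from its peers.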

Database and Servers

Most commercial databases report error conditions through their own internal tooling. What sometimes surprises application managers is that these error conditions can also be exposed and identified in their network and/or heartbeat conditions.

Why is this Valuable? Most database administrators will leverage a database spy or performance agent in this tier. Leveraging your APM/NPM solution to complement those internal system tools, by locating DB errors, latency, and TCP-level errors, only reduces the overall time to get to a probable cause at this tier. Again, the name of the game is service triage, and doing it FAST.

Printing and File Server Storage or Denial of Service

These are usually a lesser priority inside a multi-tier application environment, yet performance issues here are still particularly difficult to identify. Think of the situation where the actual multi-tier ERP is working perfectly, yet an end user needs to print out shipping information … but cannot.

Why is this Valuable? Quicker problem isolation leads to quicker resolution. Often, servers and protocols that are NOT considered part of the multi-tier application ecosystem in fact … have some type of dependency on it. I have seen situations where a Windows server storage error or an outside Denial of Service attack also impacted multi-tier application performance. If you think in terms of seeing all the pieces that can impact a service, you quickly see why this approach is so valuable.
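Pulling the tiers together, a service view can be as simple as walking the pieces in the order a user request touches them and stopping at the first one that reports errors. The sketch below is my own simplification: the tier names, their order, and the error-count input are assumptions for illustration, not a prescribed method.

```python
# Assumed dependency order, following the walk-through above.
TIER_ORDER = [
    "service enablers",
    "front end",
    "application",
    "database",
    "print/file/storage",
]


def first_suspect(tier_errors):
    """Return the first tier, in dependency order, that reports errors.

    tier_errors: dict mapping tier name to an error count (assumed format).
    Returns None when no tier reports errors.
    """
    for tier in TIER_ORDER:
        if tier_errors.get(tier, 0) > 0:
            return tier
    return None


if __name__ == "__main__":
    # Hypothetical error counts gathered from each tier.
    print(first_suspect({"front end": 3, "database": 1}))
```

Starting at the earliest failing tier does not prove root cause, but it puts probability on your side when deciding where to look first.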

Points to Ponder

- What is your best example of a complicated multi-tier application?

- What has been your most difficult war story in finding an associated performance issue inside the environment?
